Viral images created by artificial intelligence have become a prominent phenomenon in modern digital culture. Such visuals gather millions of views, launch waves of discussion, and form new memes significantly faster than traditional content. AI-generated images operate at the intersection of novelty, emotionality, and social platform algorithms. Why do they spread so fast? Let's look at this in more detail.

Artificial intelligence has changed not only the way images are created but also the very nature of virality. Where creating a visual capable of reaching millions of people once required a budget, a team, and a production process, today a well-formulated prompt to an image generator is sufficient. AI has turned the individual creator into a mini-studio and production speed into a competitive advantage.

However, the mere fact of generation does not guarantee reach. Most AI images remain unnoticed. Only those that hit a cultural, emotional, or informational trigger point become viral. This could be a relevant political event, a recognizable pop culture character, a nostalgic style, or intentional absurdity that easily turns into a meme.

Another important feature is algorithmic amplification. Social platforms promote content that elicits a quick reaction: comments, saves, reposts. AI images often provoke precisely this reaction, prompting users to stop and ask "Is this real?" or "How was this created?" Doubt, admiration, or outrage become triggers for interaction.

At the same time, the virality of AI visuals is often based on the "hyperrealistic fiction" effect: when an image looks plausible but depicts the impossible. This gap between reality and fantasy creates cognitive dissonance that people want to discuss and share.

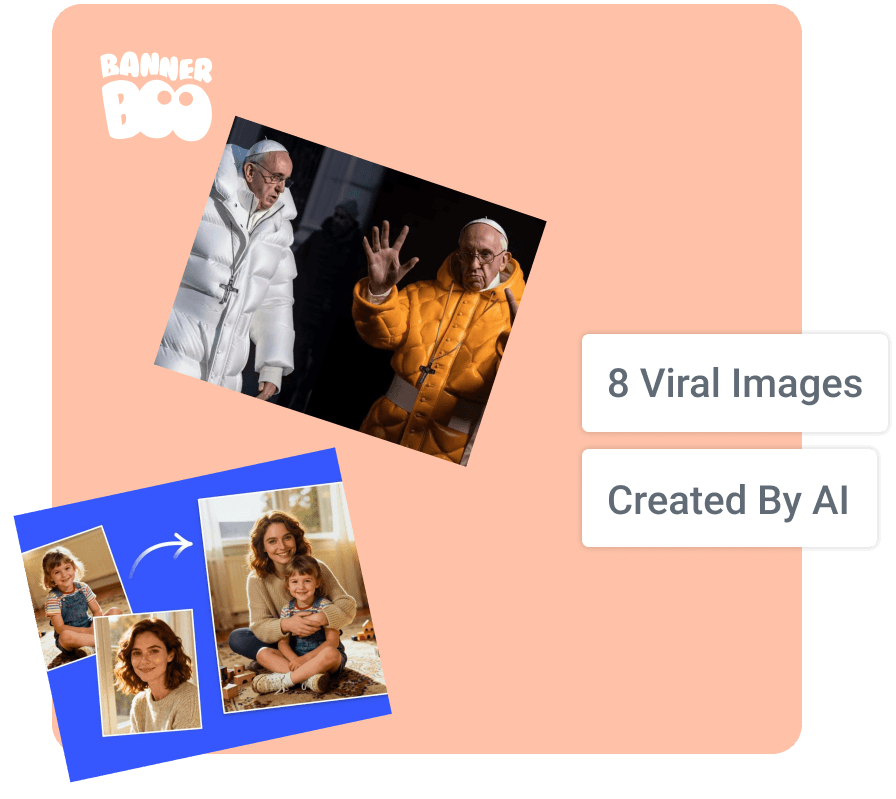

Let's look at specific examples of AI-generated images that went viral and analyze which mechanics worked in each case.

The virality of AI images is almost never accidental. Behind every high-profile case lies a combination of context, an emotional trigger, and the algorithmic logic of the platform. Some of these images became memes, some became subjects of public discussion, and some even influenced the media agenda. Below are eight examples that show different ways in which AI images gain mass reach.

What it is: A photorealistic AI image depicting Pope Francis in a trendy white Balenciaga-style puffer jacket.

Where it went viral: Twitter (now X), later Instagram and Reddit.

Why: Strong contrast between a sacred figure and fashion aesthetics (novelty), slight absurdity, and photorealism that raised doubts about authenticity (emotional trigger). It became one of the most discussed AI images.

Distribution mechanics: The first posts of the image were quickly picked up by meme accounts and fashion communities; users actively reposted it with the question "is this real or not?", which created a mass discussion effect and accelerated virality.

What it is: A series of AI images showing the alleged arrest of Donald Trump.

Where it went viral: Twitter/X and news media.

Why: Political relevance (context), hyperrealism that created an illusion of plausibility, and the "what if it's true?" effect (emotion + informational resonance).

Distribution mechanics: The images spread through political accounts and news pages and were widely retweeted by users discussing whether the event could be real.

What it is: Absurd AI-generated characters with pseudo-Italian names that quickly turned into a meme series.

Where it went viral: TikTok and Instagram Reels.

Why: Pure absurdity, ease of remixing (memetic quality), seriality picked up by thousands of users.

Distribution mechanics: Users created their own variations of the characters, turning the trend into an endless meme chain with thousands of remixes.

What it is: AI images in which users hug their childhood selves.

Where it went viral: Instagram (Reels, Stories).

Why: Nostalgia (emotion), personalization, simple repetitive format (seriality).

Distribution mechanics: Users replicated the format en masse, publishing their own versions and tagging friends, which set off a chain reaction of new content.

What it is: Images created in the aesthetic of Studio Ghibli.

Where it went viral: TikTok, Instagram, X.

Why: Nostalgia, recognizable style, emotional warmth, and a mass desire to "redraw" oneself in a familiar universe.

Distribution mechanics: Large numbers of users generated their own portraits in the anime style and shared the results, creating a wave of user-generated content.

What it is: A realistic image of an explosion near the Pentagon building, created by artificial intelligence and stylized as a photo from a news report.

Where it went viral: X, where the image quickly spread through accounts that publish news.

Why: The urgent-news effect (context), high plausibility of the image, shock factor, and rapid spread through news accounts.

Distribution mechanics: News aggregators and "breaking news" accounts picked up the image, after which it instantly spread through retweets.

What it is: A realistic AI-generated image of Katy Perry at the 2024 Met Gala, which went viral even though the pop star did not attend the event.

Where it went viral: Instagram and X during the Met Gala.

Why: Current event (context), plausibility, "exclusive shot" effect (novelty).

Distribution mechanics: Users shared the photo as if it were a "red carpet leak," which amplified interest in the image.

What it is: Realistic images of space, astronauts, and galaxies, created by artificial intelligence and stylized as official photos from space missions or telescopes. Many users perceived them as real photos from space programs.

Where it went viral: Instagram, Reddit, and X in communities dedicated to space and science.

Why: Visual plausibility, association with the authority of space agencies, "rare space shot" effect (novelty).

Distribution mechanics: Images were actively reposted in scientific and space communities, where users shared them as "unique shots," which accelerated their virality.

All these viral AI images are united not by technology as such, but by a trigger: a strong emotion, cultural context, or absurdity that is easily scalable into a meme. Virality appears where plausibility and unexpectedness combine. This balance is what triggers algorithms and mass distribution.

Virality does not mean copying. Most AI images that went viral did so not because of the style itself, but because of the idea, context, and presentation. The effect can be replicated, not by reproducing someone else's plot, but by using the same mechanics: contrast, emotion, absurdity, relevance, and the correct format for the platform.

Here are three key principles.

Don't copy the visual – copy the logic.

If an image went viral due to contrast (e.g., sacred + fashion), don't recreate the same image. Find another cultural or social contrast in your niche.

What needs to be done?

Virality is born where there is interpretation, not a copy.

Most viral AI images turned into a series. One successful visual provides the impulse; a series provides the scale.

Why does this work?

What needs to be done?

A series forms not just a viral post, but a microtrend.

The same AI visual will work differently depending on the platform.

What is important for TikTok / Reels?

What is important for Instagram?

What is important for X (Twitter)?

What is important for LinkedIn?

Virality often depends not on the picture, but on how it is packaged.

Key idea: to replicate the success of viral AI images, you don't need to copy a specific image. You need to reproduce the mechanics: contrast or emotion, then seriality, then adaptation for platforms, and finally – audience engagement.

AI is just a tool. Strategy creates virality.

Virality does not absolve you of responsibility. AI images can scale quickly, but reputational, legal, and ethical risks grow along with reach.

What should be considered?

If an image is created by artificial intelligence, this must be clear to the audience. Labels such as "created with AI" or "AI-generated" reduce the risk of misleading viewers and increase trust.

Using images of public figures without permission can lead to legal consequences, especially if the content is misleading or harms reputation. Deepfake content is a high-risk area.

Imitating the recognizable style of a particular artist, studio, or brand can raise questions about intellectual property. It is safer to create your own interpretation of a style rather than copying a specific visual signature.

Photorealistic AI images that simulate events that did not happen can spread misinformation. Extreme caution should be exercised with politics, disasters, military events, and other socially sensitive topics.

Social networks are gradually implementing requirements for labeling AI content. Violations can lead to restricted reach or account blocking.

Main principle: if an AI image can be perceived as a real fact, it needs to be labeled. If it can cause harm, it should not be published. Virality is short-term, but audience trust is a strategic asset.
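The main principle above can be written down as a short pre-publication check. This is only an illustrative sketch: the names `ImageReview`, `Decision`, and `review_decision` are hypothetical, both flags are human judgments rather than anything a tool detects, and checking harm before labeling is an assumption about how the two rules combine.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    DO_NOT_PUBLISH = "do not publish"
    PUBLISH_WITH_LABEL = "publish with an AI label"
    PUBLISH = "publish"


@dataclass
class ImageReview:
    # Hypothetical fields: both are judgments made by a person before posting.
    could_pass_as_real: bool
    could_cause_harm: bool


def review_decision(review: ImageReview) -> Decision:
    # Assumption: harm is checked first, because a label does not
    # make potentially harmful content acceptable to publish.
    if review.could_cause_harm:
        return Decision.DO_NOT_PUBLISH
    # An image that could be taken for a real fact needs a label.
    if review.could_pass_as_real:
        return Decision.PUBLISH_WITH_LABEL
    # Otherwise publish; making the AI origin clear is still recommended.
    return Decision.PUBLISH
```

For example, a photorealistic but harmless image would be reviewed as `ImageReview(could_pass_as_real=True, could_cause_harm=False)` and would come back as `Decision.PUBLISH_WITH_LABEL`.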

Viral images created by artificial intelligence are not just a technological phenomenon, but a marker of change in the entire content ecosystem. AI has radically lowered the barrier to visual production, but at the same time increased the demands on the idea. When everyone can create an "impressive picture," the concept wins, not the tool.

The analysis of these cases shows a pattern. Virality arises at the intersection of three factors: a strong trigger (emotion, absurdity, fear, nostalgia), relevant context (news, pop culture, social topics), and algorithmic amplification by the platform. If even one element is missing, scaling does not occur. That is why most AI images remain unnoticed, and only a few become cultural events.

Another important point is the effect of "plausible fiction." People tend to interact with content that looks realistic but depicts something unexpected or impossible. This cognitive gap makes them stop, look closely, comment, and share. In the digital environment, such a reaction triggers algorithms.

At the same time, virality is no longer purely a creative achievement; it can become dangerous. Therefore, the strategy for working with AI visuals should include not only creative technique but also an ethical framework and an understanding of legal risks.

So, artificial intelligence does not create virality automatically; it only accelerates production. It is the idea behind the image, not the tool, that makes it spread.